RLVR might be disproportionately bad at science

Mirrored from Dwarkesh Podcast for archival readability. Support the source by reading on the original site.

I’m writing up some threads that we explored in my interview with Michael Nielsen. That episode was one of my favorites.

The organizing question from my interview with Michael Nielsen was, “How do we recognize scientific progress?” It’s especially relevant to thinking about what it would take for AI to close the RL verification loop on scientific discovery. But it’s also a surprisingly mysterious and elusive question when thinking about the history of human science.

Some people think AI is going to be disproportionately good at making scientific breakthroughs. Their reasoning is that (1) science is ‘verifiable’, and (2) AI is absolutely crushing domains that have a tight verification loop (coding, math, etc.) because you can do RL on these loops.

But the history of human science shows that the verification loop for theories can run on the order of decades or centuries, and even then experiments do not definitively rule out alternatives. Ancient Greek astronomers dismissed Aristarchus’s heliocentrism (3rd century BC) because it implied a stellar parallax that no one could observe. Stellar parallax was first successfully measured by Friedrich Wilhelm Bessel in 1838.

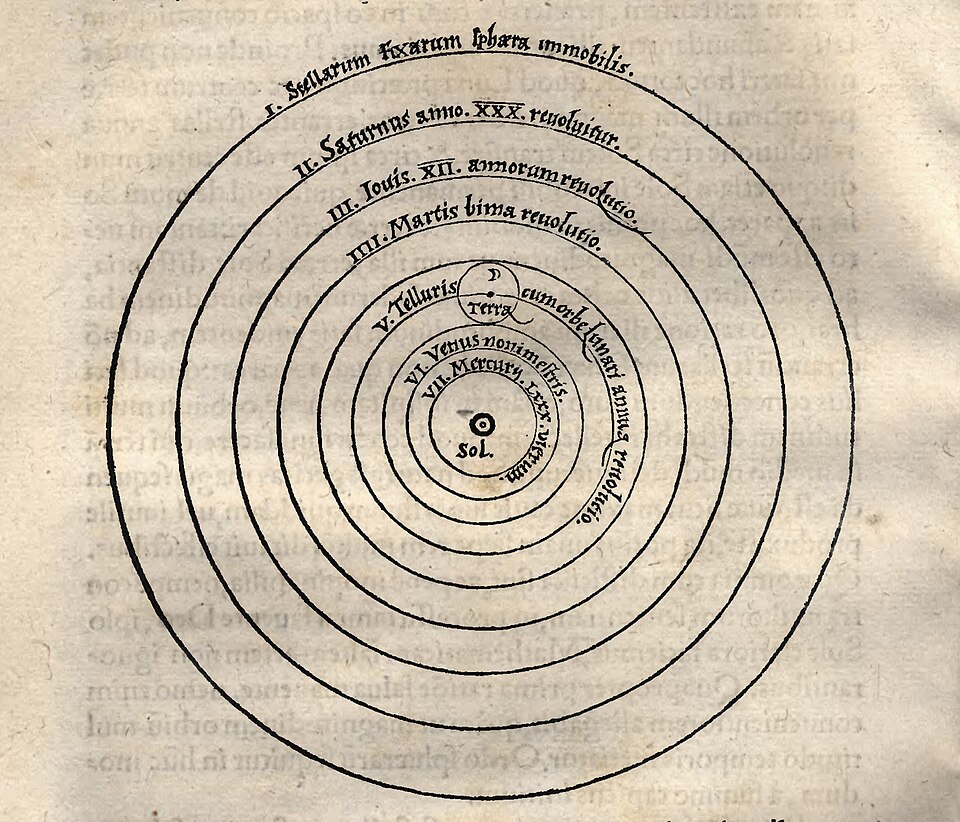

What we know today as the better theory can often make worse predictions. It’s well known that Copernicus’s model of circular orbits around the sun was less accurate than Ptolemy’s geocentric model, which had accumulated more than a millennium of corrective epicycles. What is less well known is that Copernicus’s theory wasn’t even simpler. Ptolemy’s model approximated the true elliptical orbits using an equant trick: a planet’s motion is uniform not around Earth, or even around the center of its circle, but around an off-center point. Copernicus didn’t like this because it violated his Platonic heuristics, so he discarded the equant. That led to a less parsimonious model, since Copernicus had to add more epicycles and epicyclets to make up for it.

So in what sense was it a better theory in 1543? In some sense, it wasn’t! You couldn’t have known ex ante that heliocentrism married with Kepler’s three laws (1609-1619) would yield a much cleaner and more accurate theory, or that there’s a beautiful unification of heliocentric orbits and terrestrial gravity (Newton, 1687).

There was one ex ante reason you should have preferred Copernicus in 1543: retrograde motion fell out as a natural consequence of his theory, whereas for Ptolemy it was an ad hoc addition. Even more impressively, his theory, published in 1543, predicted the phases of Venus before Galileo observed them in 1610. But both of these things were also implied by Tycho Brahe’s model, in which the sun orbits the Earth and all the other planets orbit the sun.

Under a naive falsificationist framework, you’d have to wait until stellar parallax was observed in 1838 to know that Brahe was wrong. But obviously the scientific community was able to make progress faster than this. There is some mixture of judgment and heuristics in the progress of science that we don’t even understand well enough to articulate, much less codify into an RL loop.

Or consider the case of the discovery of Neptune in 1846. Uranus deviated from its predicted Newtonian path. Le Verrier predicted that an unknown perturbing planet must exist, calculated its mass and orbit, and Neptune was found almost exactly where predicted.

But the Neptune story has a mirror-image failure case. Mercury’s orbit shows an anomalous precession: its orbital ellipse rotates about 43 arcseconds per century more than Newtonian mechanics predicts from the pull of the other planets. This led astronomers, Le Verrier among them, to posit an unknown planet, Vulcan, inside Mercury’s orbit. No such planet was ever found; the anomaly was instead resolved in 1915 by Einstein’s general relativity.
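As a rough check on the 43-arcsecond figure, here is a minimal sketch using the standard general-relativistic perihelion-advance formula, Δφ = 6πGM / (c²a(1 − e²)) per orbit. The orbital constants are approximate modern values I’m assuming for illustration; they are not numbers from the episode.

```python
import math

# Approximate modern constants (assumed values, SI units)
GM_SUN = 1.32712440018e20      # Sun's gravitational parameter, m^3/s^2
C = 299_792_458.0              # speed of light, m/s
A_MERCURY = 5.7909e10          # Mercury's semi-major axis, m
E_MERCURY = 0.2056             # Mercury's orbital eccentricity
PERIOD_DAYS = 87.969           # Mercury's orbital period, days

ARCSEC_PER_RAD = 180 / math.pi * 3600


def gr_precession_arcsec_per_century() -> float:
    """Extra perihelion advance predicted by general relativity, in arcsec/century."""
    # Advance per orbit, in radians: 6*pi*GM / (c^2 * a * (1 - e^2))
    per_orbit = 6 * math.pi * GM_SUN / (C**2 * A_MERCURY * (1 - E_MERCURY**2))
    # Number of Mercury orbits in a Julian century (36525 days)
    orbits_per_century = 36525 / PERIOD_DAYS
    return per_orbit * orbits_per_century * ARCSEC_PER_RAD


if __name__ == "__main__":
    print(f"{gr_precession_arcsec_per_century():.1f} arcsec/century")
```

In this sketch the result comes out at roughly 43 arcseconds per century, the same residual that no Vulcan was ever found to explain.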

A committed Newtonian would still press on with the research program, patching it at each step. First, you predict some unknown planet. If it can’t be found, you say it must be too small to see and require a bigger telescope, so you build one. If you still can’t find it, maybe a cloud of cosmic dust is hiding it. If it still isn’t found, maybe the satellite’s instruments are being thrown off by some unknown magnetic field, so you send a new satellite. At each of these steps, discovering the new planet, the cosmic dust, or the magnetic field would have been a sensational victory for Newtonians.

Ex ante, this is not unreasonable! Only after decades, or maybe centuries, of patchwork can we tell whether we are simply adding epicycles, or whether the theoretical framework is progressive, in that it makes predictions we otherwise couldn’t.

What do these examples illustrate? That ex ante it is almost impossible to determine which research programs are progressive (will predict and explain unanticipated new phenomena) and which are regressive (need to be contorted repeatedly to accommodate seemingly disconfirming new phenomena).

And the verification loop is often extremely long and weirdly hostile, and even then, experiments do not definitively rule out alternatives (see the discussion in the Nielsen episode about how physicists contemporary with the Michelson-Morley experiments of the 1880s thought they simply ruled out a particular theory of the ether; only Einstein made the full conceptual leap to discard the ether altogether).

This means that big conceptual breakthroughs cannot be easily verified. They are recognized decades or centuries later, when it turns out they were much more productive than the alternatives available. What this means for AI for science is twofold:

1. You can’t easily close an RL loop on big conceptual breakthroughs.
2. A society of AI scientists will still need individual AI instances with idiosyncratic biases and heuristics that they pursue relentlessly for decades on end, like Einstein’s insistence that there shouldn’t be some privileged inertial reference frame. There need to be dedicated people keeping a bunch of dormant research agendas alive in case they turn out to be productive upon further investigation.

To understand the kind of intransigent dedication to hypotheses needed to preserve correct scientific ideas, even in the face of disconfirming evidence, consider the story of Prout’s hypothesis. In 1815, William Prout hypothesized that the atomic weights of all pure chemical elements are whole numbers, because experimentally, most elements seemed to come out that way. But there were many anomalies: chlorine’s atomic weight, for example, was measured at 35.5. So Prout’s school claimed that maybe the chemical substances in which these elements appeared were impure. But no chemical reaction seemed able to get rid of the impurities. Then they said maybe the weights were simple fractions of whole atomic weights, but the more precisely you measured, the less natural the fractions got: chlorine went from 35.5 to 35.46. It took almost a century for people to realize that these measurements reflected a mixture of multiple isotopes of the same element, which can be separated physically but are chemically indistinguishable.
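The isotope resolution of the chlorine anomaly comes down to a weighted average. Here is a minimal sketch, assuming approximate modern isotopic masses and natural abundances for chlorine-35 and chlorine-37 (these figures are my own inputs, not from the essay): each isotope on its own sits close to a whole number, as Prout expected, but the natural mixture averages out near 35.45.

```python
# Approximate modern values (assumed): isotope -> (isotopic mass in u, natural abundance)
CHLORINE_ISOTOPES = {
    "Cl-35": (34.96885, 0.7576),
    "Cl-37": (36.96590, 0.2424),
}


def average_atomic_weight(isotopes: dict[str, tuple[float, float]]) -> float:
    """Abundance-weighted mean of the isotopic masses."""
    total_abundance = sum(abundance for _, abundance in isotopes.values())
    weighted = sum(mass * abundance for mass, abundance in isotopes.values())
    return weighted / total_abundance


if __name__ == "__main__":
    for name, (mass, _) in CHLORINE_ISOTOPES.items():
        # Each isotope alone is within about 0.04 u of a whole number
        print(f"{name}: {mass:.3f} (nearest whole number {round(mass)})")
    print(f"Natural mixture: {average_atomic_weight(CHLORINE_ISOTOPES):.2f}")
```

Neither isotope is far from a whole number; only the mixture is, which is why no amount of chemical purification could rescue the hypothesis.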

What I’m trying to say is that ex ante, one couldn’t have known which research program would be more productive. We need to invest in all of them concurrently. But that investment looks like a bunch of individual scientists being super unreasonable and obstinate about propping up their preferred research agendas.

What does the parallel discovery of a deep idea like Darwinism tell us?

The Origin of Species was published in 1859. Newton’s Principia was published in 1687, almost two centuries earlier. Conceptually, natural selection seems much simpler than the theory of gravity. A contemporary of Darwin’s, Thomas Henry Huxley, read the Origin of Species and said, “How extremely stupid not to have thought of that!” Nobody ever said the same about failing to beat Newton to the Principia. I wonder if the reason for the delay is that, while Darwin’s theory is conceptually simpler, it cannot be decisively tested. The evidence is circumstantial, retrospective, and cumulative. There’s no equivalent of Newton running the numbers on the moon’s orbital period and radius and confirming that they match his equations.
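For contrast, Newton’s moon test is a short calculation. This is a rough modern reconstruction, assuming present-day values for the moon’s distance and period, Earth’s radius, and surface gravity (none of these numbers come from the essay): if gravity falls off as 1/r², the moon, about 60 Earth radii away, should accelerate toward Earth at roughly g/60², and that should match the centripetal acceleration 4π²r/T² implied by its orbit.

```python
import math

# Approximate modern values (assumed), SI units
G_SURFACE = 9.81               # gravitational acceleration at Earth's surface, m/s^2
EARTH_RADIUS = 6.371e6         # mean radius of Earth, m
MOON_DISTANCE = 3.844e8        # mean Earth-moon distance, m
MOON_PERIOD = 27.3217 * 86400  # sidereal month, s


def moon_test() -> tuple[float, float]:
    """Return (orbit-implied acceleration, inverse-square prediction) in m/s^2."""
    # Centripetal acceleration for a circular orbit of radius r and period T
    observed = 4 * math.pi**2 * MOON_DISTANCE / MOON_PERIOD**2
    # Surface gravity diluted by the square of the distance measured in Earth radii
    predicted = G_SURFACE / (MOON_DISTANCE / EARTH_RADIUS) ** 2
    return observed, predicted


if __name__ == "__main__":
    observed, predicted = moon_test()
    print(f"observed {observed:.5f}, predicted {predicted:.5f}, ratio {observed / predicted:.3f}")
```

In this rough version the two accelerations agree to within about 1%, a crisp quantitative check of a kind natural selection never had.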

You also need the concept of deep time. Charles Lyell published the Principles of Geology in 1830, which gave Darwin the vast stretches of time that natural selection needed. And the fact that Darwin and Wallace arrived at evolution by natural selection at essentially the same time (and both credited Lyell’s contribution) does suggest that these underrated intellectual footholds were quite important: geology; the paleontology of extinct species, which showed intermediate forms (in some cases between apes and humans); biogeography from voyages of exploration and the age of colonization; and more sophisticated artificial selection, like pigeon breeding. It’s interesting that an idea whose essence must have been obvious to herders and parents for thousands of years actually required so many ancillary intuition pumps, accumulated over millennia, to fully spell out.

The pattern of parallel discovery in science and technology is very interesting, and seems to contradict the vibe that certain innovations could have happened much earlier than they actually did.
