Anthropic's Claude Managed Agents can now "dream," sort of

Mirrored from Ars Technica — AI for archival readability. Support the source by reading on the original site.

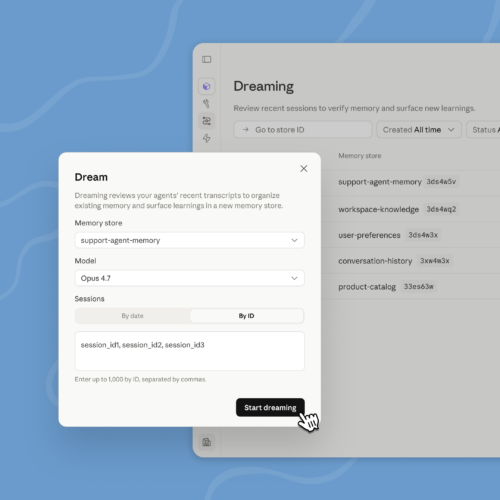

SAN FRANCISCO—At its Code with Claude developers' conference, Anthropic has introduced what it calls "dreaming" to Claude Managed Agents. Dreaming, in this case, is a process of going over recent events and identifying specific things that are worth storing in "memory" to inform future tasks and interactions.

Dreaming is currently in research preview and limited to Managed Agents on the Claude Platform. Managed Agents are a higher-level alternative to building directly on the Messages API; Anthropic describes the offering as a "pre-built, configurable agent harness that runs in managed infrastructure." It's intended for situations where you want multiple agents working on a task or project toward some end point over several minutes or hours.

Anthropic describes dreaming as a scheduled process in which sessions and memory stores are reviewed and specific memories are curated. This matters because LLMs have limited context windows, and important information can be lost over lengthy projects. On the chat side of things, many models use a process called compaction, whereby lengthy conversations are periodically analyzed and the model attempts to remove irrelevant information from the context window while keeping what's actually important for the ongoing conversation, project, or task.
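For readers who want a concrete picture of compaction, here is a minimal Python sketch of the general idea. It is not Anthropic's implementation: the `Message` type, the word-count token estimate, and the `summarize` helper are all hypothetical stand-ins, and a real system would ask the model itself to write the summary.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Assumption: roughly one token per whitespace-separated word.
    return len(text.split())


def summarize(messages: list[Message]) -> str:
    # Hypothetical stand-in for a model-written summary:
    # keep only the first sentence of each older message.
    return " ".join(m.text.split(".")[0].strip() + "." for m in messages)


def compact(history: list[Message], budget: int, keep_recent: int = 2) -> list[Message]:
    """Replace older messages with one summary when the history exceeds the budget."""
    total = sum(estimate_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # Still fits, or nothing old enough to summarize.

    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    summary = Message("system", "Summary of earlier conversation: " + summarize(older))
    return [summary] + recent
```

The most recent messages are kept verbatim because they are the likeliest to matter for the next turn; everything older is collapsed into a single summary message. Dreaming, as Anthropic describes it, differs in that it runs on a schedule and writes curated memories to a store rather than trimming a live context window.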
