Lil'Log (Lilian Weng)

51 articles archived

Lil'Log (Lilian Weng) research 12mo ago

Why We Think

Special thanks to John Schulman for a lot of super valuable feedback and direct edits on this post. Test-time compute (Graves et al. 2016, Ling et al. 2017, Cobbe et al. 2021) and chain-of-thought (CoT) (Wei et al. 2022, Nye et al. 2021) have led to significant…


Lil'Log (Lilian Weng) research 17mo ago

Reward Hacking in Reinforcement Learning

Reward hacking occurs when a reinforcement learning (RL) agent exploits flaws or ambiguities in the reward function to achieve high rewards, without genuinely learning or completing the intended task. Reward hacking exists because RL environments are often imperfect, and it is…


Lil'Log (Lilian Weng) research 22mo ago

Extrinsic Hallucinations in LLMs

Hallucination in large language models usually refers to the model generating unfaithful, fabricated, inconsistent, or nonsensical content. As a term, hallucination has been somewhat generalized to cases when the model makes mistakes. Here, I would like to narrow down the…


Lil'Log (Lilian Weng) research 25mo ago

Diffusion Models for Video Generation

Diffusion models have demonstrated strong results on image synthesis in past years. Now the research community has started working on a harder task: using them for video generation. The task itself is a superset of the image case, since an image is a video of 1 frame, and it…


Lil'Log (Lilian Weng) research 27mo ago

Thinking about High-Quality Human Data

[Special thank you to Ian Kivlichan for many useful pointers (e.g. the 100+ year old Nature paper “Vox populi”) and nice feedback. 🙏] High-quality data is the fuel for modern deep learning model training. Most of the task-specific labeled data comes from human…


Lil'Log (Lilian Weng) research 31mo ago

Adversarial Attacks on LLMs

The use of large language models in the real world has been strongly accelerated by the launch of ChatGPT. We (including my team at OpenAI, shoutout to them) have invested a lot of effort to build default safe behavior into the model during the alignment process (e.g. via RLHF).…


Lil'Log (Lilian Weng) research 35mo ago

LLM Powered Autonomous Agents

Building agents with LLM (large language model) as its core controller is a cool concept. Several proof-of-concept demos, such as AutoGPT, GPT-Engineer and BabyAGI, serve as inspiring examples. The potentiality of LLM extends beyond generating well-written copies, stories,…


Lil'Log (Lilian Weng) research 38mo ago

Prompt Engineering

Prompt Engineering, also known as In-Context Prompting, refers to methods for how to communicate with an LLM to steer its behavior for desired outcomes without updating the model weights. It is an empirical science and the effect of prompt engineering methods can vary a lot among…


Lil'Log (Lilian Weng) research 40mo ago

The Transformer Family Version 2.0

Many new Transformer architecture improvements have been proposed since my last post on “The Transformer Family” about three years ago. Here I did a big refactoring and enrichment of that 2020 post: restructuring the hierarchy of sections and improving many…


Lil'Log (Lilian Weng) research 40mo ago

Large Transformer Model Inference Optimization

[Updated on 2023-01-24: add a small section on Distillation.] Large transformer models are mainstream nowadays, creating SoTA results for a variety of tasks. They are powerful but very expensive to train and use. The extremely high inference cost, in both time and memory, is a…


Lil'Log (Lilian Weng) research 44mo ago

Some Math behind Neural Tangent Kernel

Neural networks are well known to be over-parameterized and can often easily fit data with near-zero training loss with decent generalization performance on the test dataset. Although all these parameters are initialized at random, the optimization process can consistently lead to…


Lil'Log (Lilian Weng) research 47mo ago

Generalized Visual Language Models

Processing images to generate text, such as image captioning and visual question-answering, has been studied for years. Traditionally such systems rely on an object detection network as a vision encoder to capture visual features and then produce text via a text decoder. Given a…


Lil'Log (Lilian Weng) research 49mo ago

Learning with not Enough Data Part 3: Data Generation

Here comes Part 3 on learning with not enough data (previous: Part 1 and Part 2). Let’s consider two approaches for generating synthetic data for training. Augmented data. Given a set of existing training samples, we can apply a variety of augmentation, distortion and…


Lil'Log (Lilian Weng) research 51mo ago

Learning with not Enough Data Part 2: Active Learning

This is part 2 of what to do when facing a limited amount of labeled data for supervised learning tasks. This time we will get some amount of human labeling work involved, but within a budget limit, and therefore we need to be smart when selecting which samples to label.


Lil'Log (Lilian Weng) research 56mo ago

How to Train Really Large Models on Many GPUs?

[Updated on 2022-03-13: add expert choice routing.] [Updated on 2022-06-10: Greg and I wrote a shorter and upgraded version of this post, published on OpenAI Blog: “Techniques for Training Large Neural Networks”.]


Lil'Log (Lilian Weng) research 58mo ago

What are Diffusion Models?

[Updated on 2021-09-19: Highly recommend this blog post on score-based generative modeling by Yang Song (author of several key papers in the references).] [Updated on 2022-08-27: Added classifier-free guidance, GLIDE, unCLIP and Imagen.] [Updated on 2022-08-31: Added latent…


Lil'Log (Lilian Weng) research 60mo ago

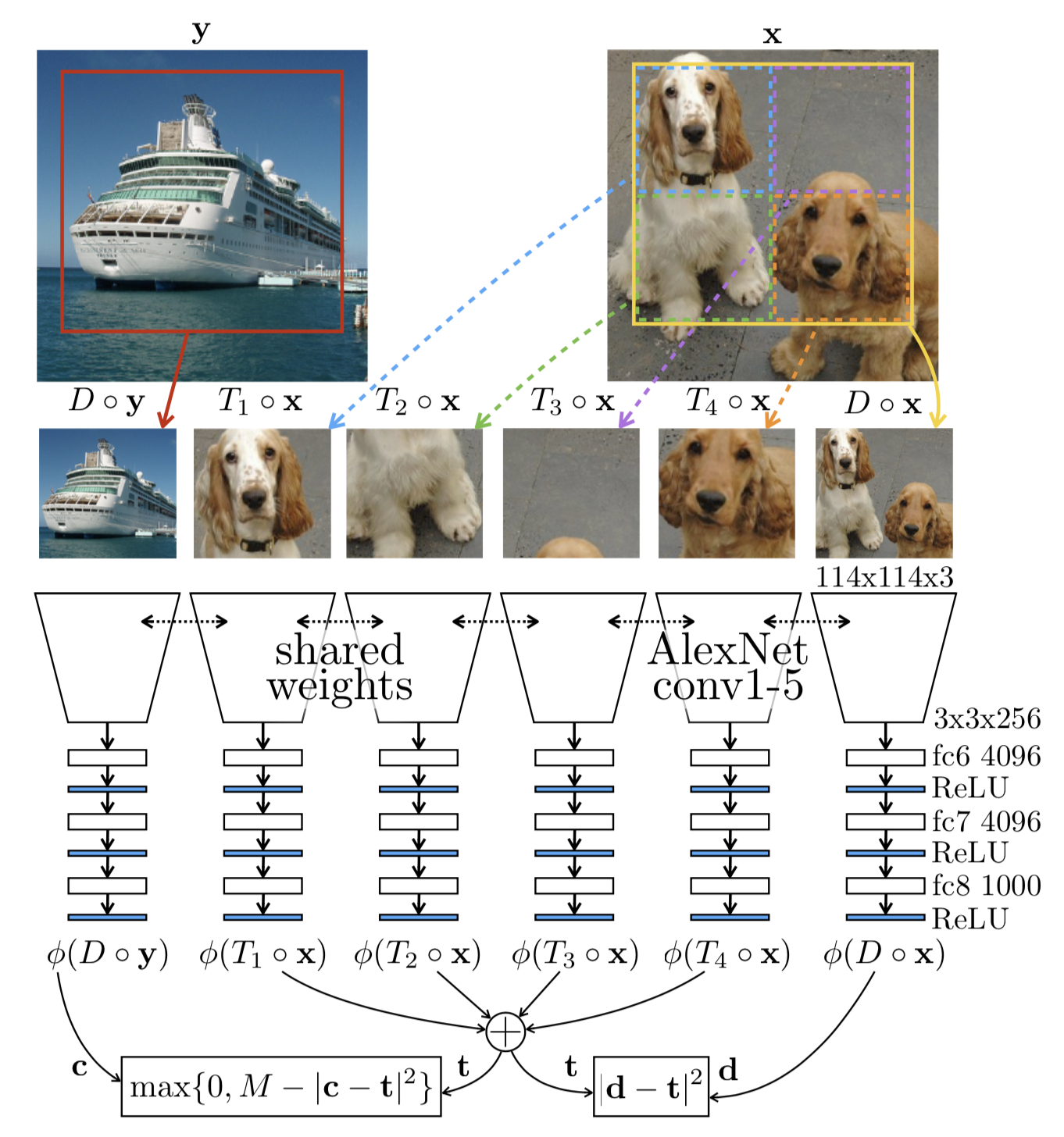

Contrastive Representation Learning

The goal of contrastive representation learning is to learn such an embedding space in which similar sample pairs stay close to each other while dissimilar ones are far apart. Contrastive learning can be applied to both supervised and unsupervised settings. When working with…


Lil'Log (Lilian Weng) research 62mo ago

Reducing Toxicity in Language Models

Large pretrained language models are trained over a sizable collection of online data. They unavoidably acquire certain toxic behavior and biases from the Internet. Pretrained language models are very powerful and have shown great success in many NLP tasks. However, to safely…


Lil'Log (Lilian Weng) research 65mo ago

Controllable Neural Text Generation

[Updated on 2021-02-01: Updated to version 2.0 with several works added and many typos fixed.] [Updated on 2021-05-26: Add P-tuning and Prompt Tuning in the “prompt design” section.] [Updated on 2021-09-19: Add “unlikelihood training”.]


Lil'Log (Lilian Weng) research 67mo ago

How to Build an Open-Domain Question Answering System?

[Updated on 2020-11-12: add an example on closed-book factual QA using OpenAI API (beta).] A model that can answer any question with regard to factual knowledge can lead to many useful and practical applications, such as working as a chatbot or an AI assistant🤖. In this post, we…


Lil'Log (Lilian Weng) research 70mo ago

Neural Architecture Search

Although most popular and successful model architectures are designed by human experts, it doesn’t mean we have explored the entire network architecture space and settled on the best option. We would have a better chance to find the optimal solution if we adopt a…


Lil'Log (Lilian Weng) research 72mo ago

Exploration Strategies in Deep Reinforcement Learning

[Updated on 2020-06-17: Add “exploration via disagreement” in the “Forward Dynamics” section.] Exploitation versus exploration is a critical topic in Reinforcement Learning. We’d like the RL agent to find the best solution as fast as possible.…


Lil'Log (Lilian Weng) research 74mo ago

The Transformer Family

[Updated on 2023-01-27: After almost three years, I did a big refactoring update of this post to incorporate a bunch of new Transformer models since 2020. The enhanced version of this post is here: The Transformer Family Version 2.0. Please refer to that post on this topic.]


Lil'Log (Lilian Weng) research 79mo ago

Self-Supervised Representation Learning

[Updated on 2020-01-09: add a new section on Contrastive Predictive Coding.] [Updated on 2020-04-13: add a “Momentum Contrast” section on MoCo, SimCLR and CURL.] [Updated on 2020-07-08: add a “Bisimulation” section on DeepMDP and DBC.] [Updated on…


Lil'Log (Lilian Weng) research 81mo ago

Evolution Strategies

Stochastic gradient descent is a universal choice for optimizing deep learning models. However, it is not the only option. With black-box optimization algorithms, you can evaluate a target function $f(x): \mathbb{R}^n \to \mathbb{R}$, even when you don’t know the precise…


Lil'Log (Lilian Weng) research 83mo ago

Meta Reinforcement Learning

In my earlier post on meta-learning, the problem is mainly defined in the context of few-shot classification. Here I would like to explore further the cases where we try to “meta-learn” Reinforcement Learning (RL) tasks by developing an agent that can solve unseen…


Lil'Log (Lilian Weng) research 85mo ago

Domain Randomization for Sim2Real Transfer

In robotics, one of the hardest problems is how to make your model transfer to the real world. Due to the sample inefficiency of deep RL algorithms and the cost of data collection on real robots, we often need to train models in a simulator which theoretically provides an…


Lil'Log (Lilian Weng) research 87mo ago

Are Deep Neural Networks Dramatically Overfitted?

[Updated on 2019-05-27: add the section on Lottery Ticket Hypothesis.] If you are like me, entering the field of deep learning with experience in traditional machine learning, you may often ponder over this question: Since a typical deep neural network has so many…


Lil'Log (Lilian Weng) research 88mo ago

Generalized Language Models

[Updated on 2019-02-14: add ULMFiT and GPT-2.] [Updated on 2020-02-29: add ALBERT.] [Updated on 2020-10-25: add RoBERTa.] [Updated on 2020-12-13: add T5.] [Updated on 2020-12-30: add GPT-3.] [Updated on 2021-11-13: add XLNet, BART and ELECTRA; Also updated the Summary…


Lil'Log (Lilian Weng) research 89mo ago

Object Detection Part 4: Fast Detection Models

In Part 3, we reviewed models in the R-CNN family. All of them are region-based object detection algorithms. They can achieve high accuracy but could be too slow for certain applications such as autonomous driving. In Part 4, we only focus on fast object detection models,…


Lil'Log (Lilian Weng) research 90mo ago

Meta-Learning: Learning to Learn Fast

[Updated on 2019-10-01: thanks to Tianhao, we have this post translated into Chinese!]


Lil'Log (Lilian Weng) research 92mo ago

Flow-based Deep Generative Models

So far, I’ve written about two types of generative models, GAN and VAE. Neither of them explicitly learns the probability density function of real data, $p(\mathbf{x})$ (where $\mathbf{x} \in \mathcal{D}$), because it is really hard! Taking the generative model…


Lil'Log (Lilian Weng) research 94mo ago

From Autoencoder to Beta-VAE

[Updated on 2019-07-18: add a section on VQ-VAE & VQ-VAE-2.] [Updated on 2019-07-26: add a section on TD-VAE.] Autoencoder was invented to reconstruct high-dimensional data using a neural network model with a narrow bottleneck layer in the middle (oops, this is probably not true…


Lil'Log (Lilian Weng) research 96mo ago

Attention? Attention!

[Updated on 2018-10-28: Add Pointer Network and the link to my implementation of Transformer.] [Updated on 2018-11-06: Add a link to the implementation of Transformer model.] [Updated on 2018-11-18: Add Neural Turing Machines.] [Updated on 2019-07-18: Correct the mistake on…


Lil'Log (Lilian Weng) research 97mo ago

Implementing Deep Reinforcement Learning Models with Tensorflow + OpenAI Gym

The full implementation is available in lilianweng/deep-reinforcement-learning-gym. In the previous two posts, I have introduced the algorithms of many deep reinforcement learning models. Now it is time to get our hands dirty and practice how to implement the models in the…


Lil'Log (Lilian Weng) research 98mo ago

Policy Gradient Algorithms

[Updated on 2018-06-30: add two new policy gradient methods, SAC and D4PG.] [Updated on 2018-09-30: add a new policy gradient method, TD3.] [Updated on 2019-02-09: add SAC with automatically adjusted temperature.] [Updated on 2019-06-26: Thanks to Chanseok, we have a version…


Lil'Log (Lilian Weng) research 101mo ago

The Multi-Armed Bandit Problem and Its Solutions

The algorithms are implemented for Bernoulli bandit in lilianweng/multi-armed-bandit. Exploitation vs Exploration: The exploration vs exploitation dilemma exists in many aspects of our life. Say, your favorite restaurant is right around the corner. If you go there every day, you…


Lil'Log (Lilian Weng) research 101mo ago

Object Detection for Dummies Part 3: R-CNN Family

[Updated on 2018-12-20: Remove YOLO here. Part 4 will cover multiple fast object detection algorithms, including YOLO.] [Updated on 2018-12-27: Add bbox regression and tricks sections for R-CNN.] In the series of “Object Detection for Dummies”, we started with basic…


Lil'Log (Lilian Weng) research 102mo ago

Object Detection for Dummies Part 2: CNN, DPM and Overfeat

Part 1 of the “Object Detection for Dummies” series introduced: (1) the concept of image gradient vector and how HOG algorithm summarizes the information across all the gradient vectors in one image; (2) how the image segmentation algorithm works to detect regions…


Lil'Log (Lilian Weng) research 103mo ago

Object Detection for Dummies Part 1: Gradient Vector, HOG, and SS

I’ve never worked in the field of computer vision and have no idea how the magic could work when an autonomous car is configured to tell apart a stop sign from a pedestrian in a red hat. To motivate myself to look into the maths behind object recognition and detection…


Lil'Log (Lilian Weng) research 104mo ago

Learning Word Embedding

Human vocabulary comes in free text. In order to make a machine learning model understand and process the natural language, we need to transform the free-text words into numeric values. One of the simplest transformation approaches is to do a one-hot encoding in which each…


Lil'Log (Lilian Weng) research 104mo ago

Anatomize Deep Learning with Information Theory

Professor Naftali Tishby passed away in 2021. I hope this post can introduce his cool idea of information bottleneck to more people. Recently I watched the talk “Information Theory in Deep Learning” by Prof. Naftali Tishby and found it very interesting. He presented how…


Lil'Log (Lilian Weng) research 106mo ago

From GAN to WGAN

[Updated on 2018-09-30: thanks to Yoonju, we have this post translated into Korean!] [Updated on 2019-04-18: this post is also available on arXiv.] Generative adversarial network (GAN) has shown great results in many generative tasks to replicate the real-world rich content such…


Lil'Log (Lilian Weng) research 106mo ago

How to Explain the Prediction of a Machine Learning Model?

Machine learning models have started penetrating critical areas like health care, justice systems, and the financial industry. Thus, to figure out how the models make decisions and to make sure the decision-making process is aligned with the ethical requirements or legal…


Lil'Log (Lilian Weng) research 107mo ago

Predict Stock Prices Using RNN: Part 2

In the Part 2 tutorial, I would like to continue the topic of stock price prediction and to endow the recurrent neural network that I have built in Part 1 with the capability of responding to multiple stocks. In order to distinguish the patterns associated with different price…


Lil'Log (Lilian Weng) research 107mo ago

Predict Stock Prices Using RNN: Part 1

This is a tutorial on how to build a recurrent neural network using Tensorflow to predict stock market prices. The full working code is available in github.com/lilianweng/stock-rnn. If you don’t know what a recurrent neural network or LSTM cell is, feel free to check my…


Lil'Log (Lilian Weng) research 108mo ago

An Overview of Deep Learning for Curious People

(This post originated from my talk at the WiMLDS x Fintech meetup hosted by Affirm.) I believe many of you have watched or heard of the games between AlphaGo and professional Go player Lee Sedol in 2016. Lee has the highest rank of nine dan and many world championships. No…


Lil'Log (Lilian Weng) research 308mo ago

FAQ

Q: How can I get an update when a new post comes out? A: I announce new posts on my Twitter account @lilianweng. There is also an RSS feed. Q: What tool do you use for plotting? A: I'm using Google Presentation (cloud version of PowerPoint). Q: What if I see something…
