How This Small Startup Achieved a Near-Perfect Record Against AI Slop

Mirrored from The Algorithmic Bridge for archival readability. Support the source by reading on the original site.

Hey, Alberto here! 👋 Each week, I publish long-form AI analysis covering culture, philosophy, and business for The Algorithmic Bridge. Paid subscribers also get Monday how-to guides and Friday news commentary. I publish occasional extra articles.

Full disclosure: This is not a paid sponsorship.

I.

The first thing you notice about AI slop is that it reads like standard online writing.

It is, in this sense, the latest of a long series of pollutants that are indistinguishable from the thing they pollute, including counterfeit currency, adulterated food, propaganda, and short-form TikTok entertainment videos passing as educational content.

Unlike the others, however, AI slop is hard to distinguish but not really hard to detect.

It’s important to stop on this subtlety for a moment: distinguishing means isolating something from its surroundings, whereas detecting means knowing something is there at all. A detector is unconcerned with what extra things might be there besides the target thing.

AI slop is easy to detect and, thus, to exterminate. Any standard classifier can do it. I can do it. You could do it if only you tried. If anything, AI slop conquered the internet because detectors were too good at catching machines. So good, in fact, that they’d also catch humans. Therein lies the problem: human writing is that extra thing that detectors catch in their large-scale drift nets while they remain, unfortunately, unbothered by their indiscriminate actions.

Everyone knows that catching a machine on purpose is easy; what almost nobody knows is how hard it is not to catch a human by mistake. The entire story of AI detectors is the story of how they want to be, instead, AI distinguishers or, failing that, of where they accept a trade-off between the two. Indeed, AI slop grew and settled as a digital mold would: in the absence of something that could separate it out, despite everyone knowing it was there.

Until now. Enter Pangram Labs.

The best way to understand Pangram’s approach to AI slop—crucially, what separates it from most other AI detection tools—is through Voltaire’s adage: perfect is the enemy of good.

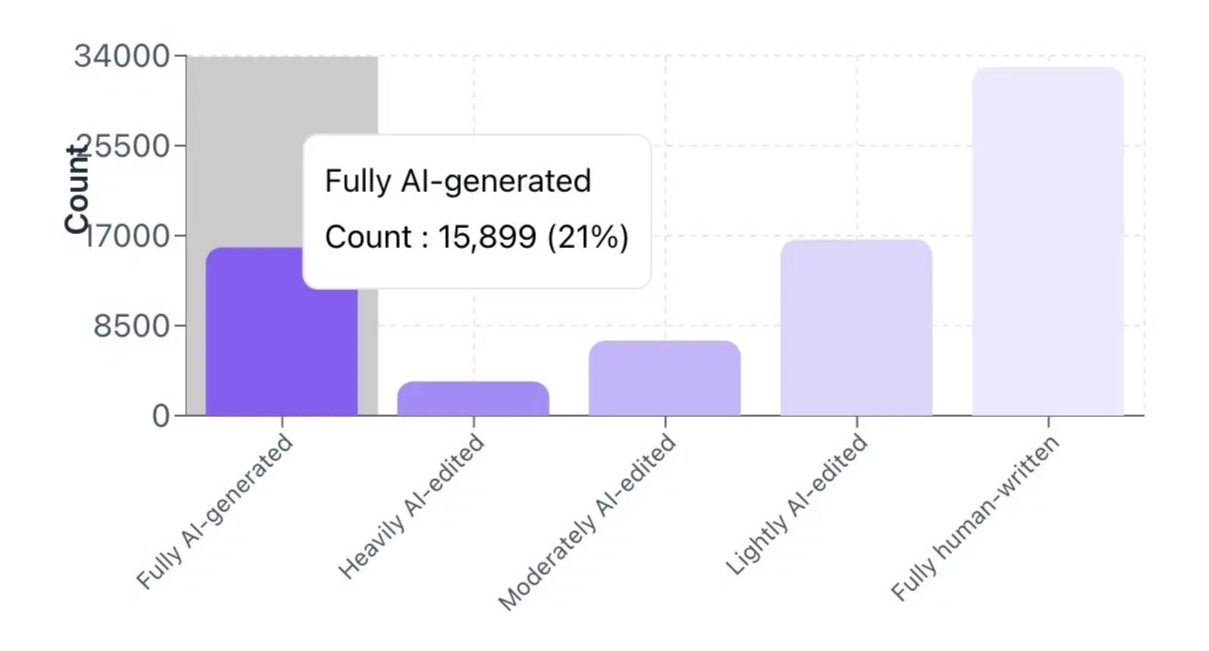

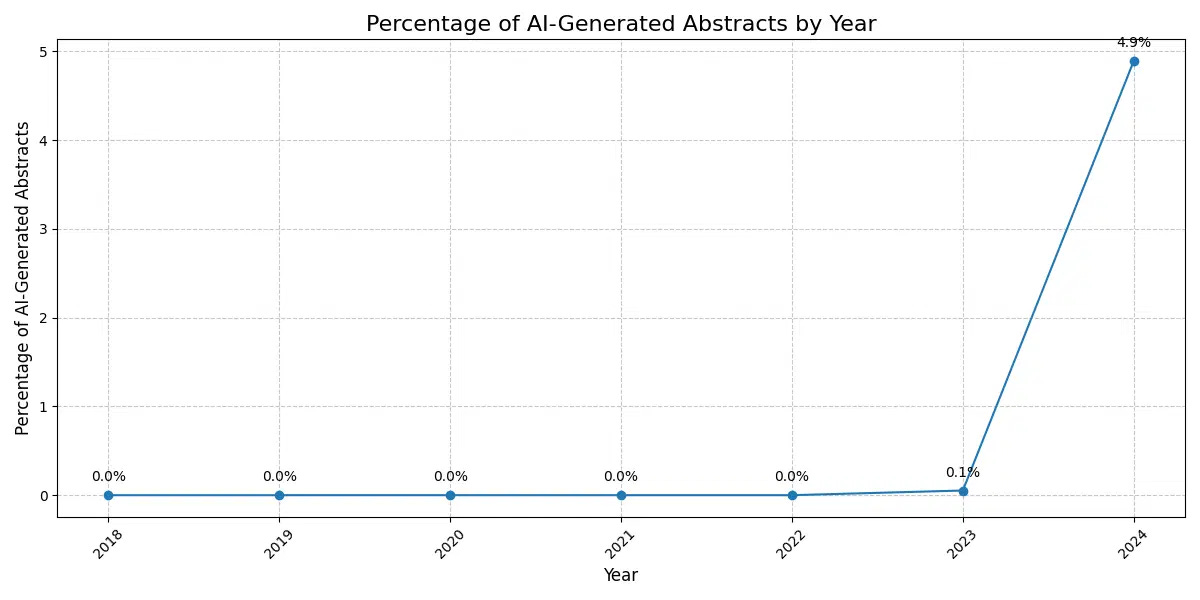

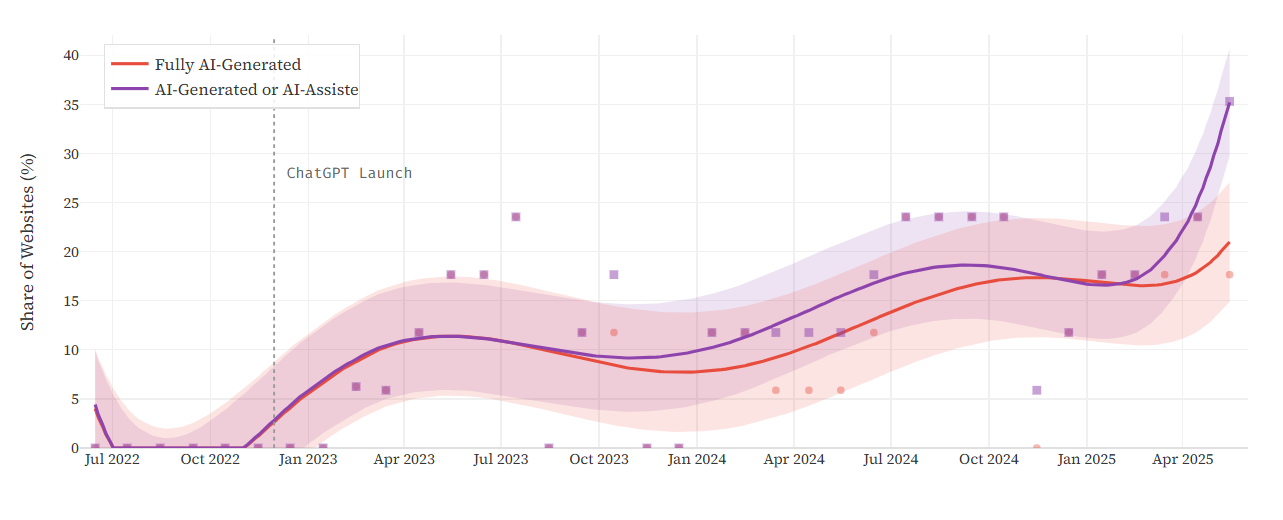

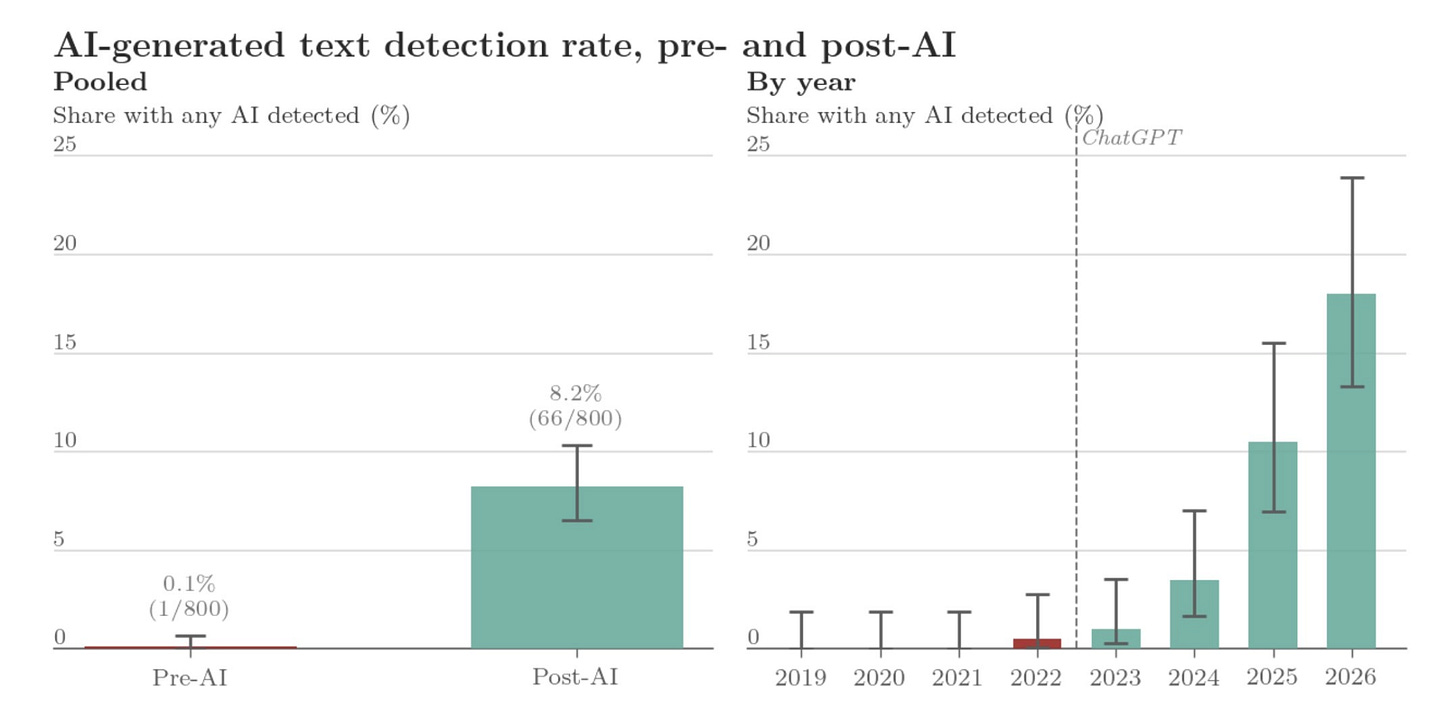

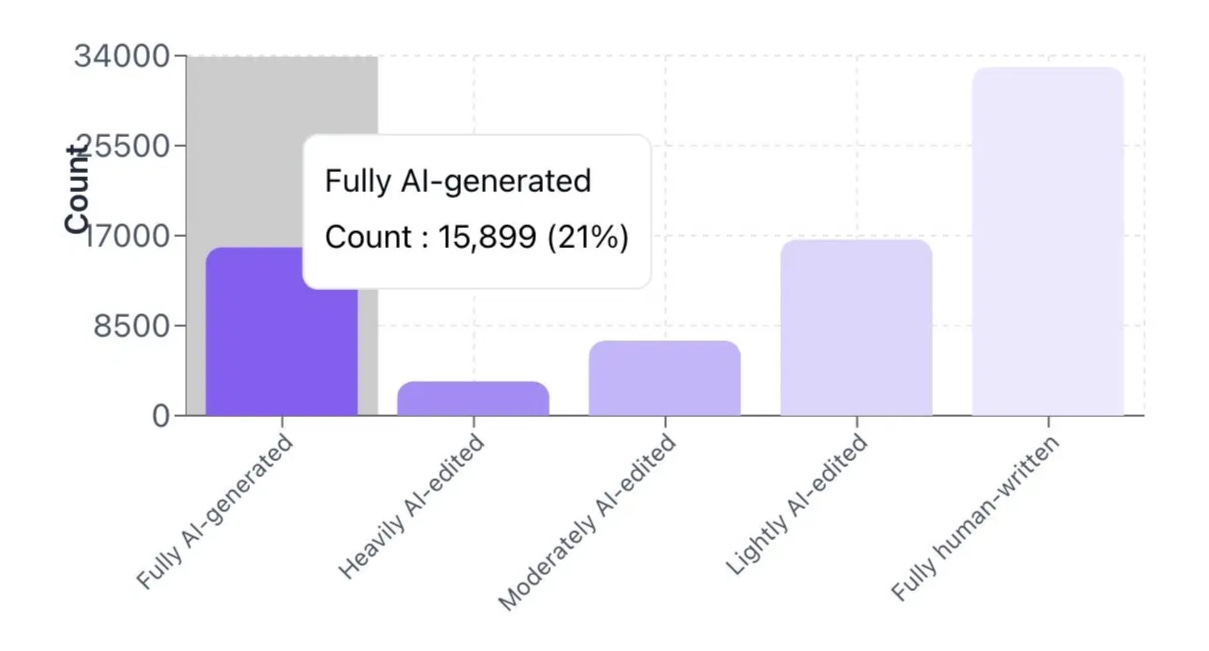

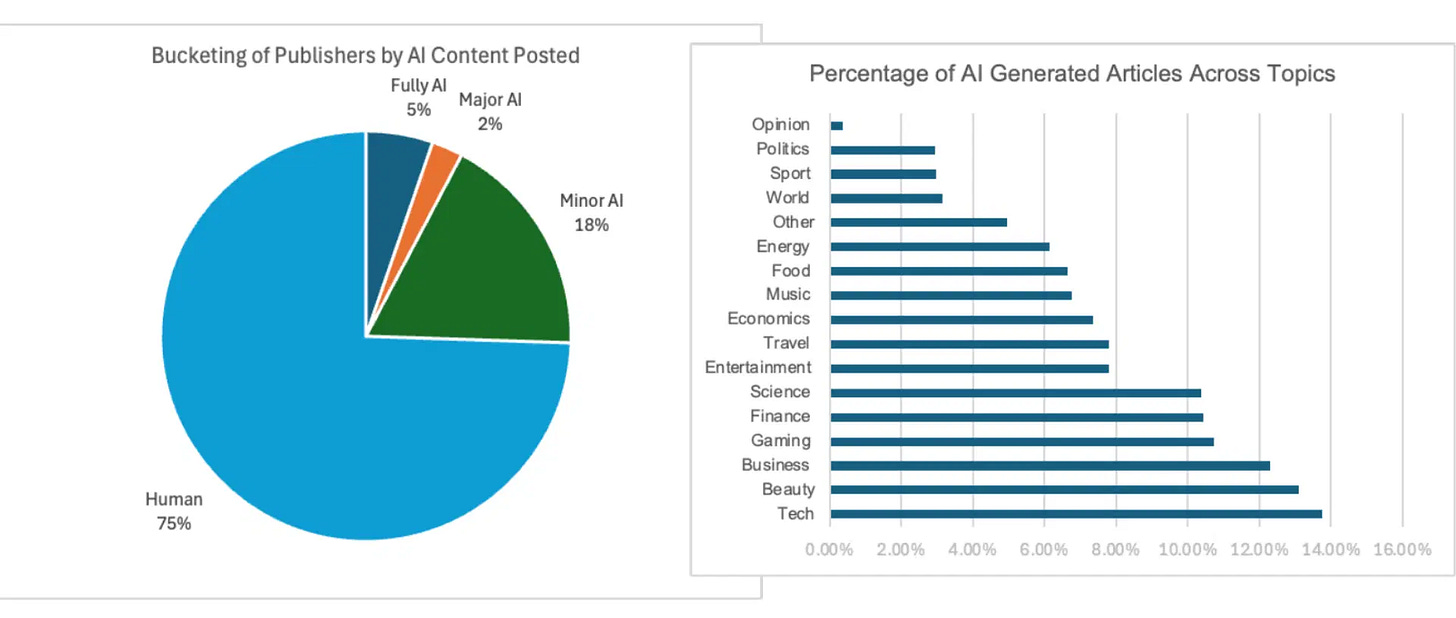

Instead of trying to wage a full-blown war against AI by detecting perfectly what is and what isn’t slop, they cleverly chose to maximize their odds on the most important battle: how to ensure that everything they catch is, actually, AI. Or, to use the technical term, they wanted to achieve a near-zero “false positive rate” (FPR). You can see why that’s valuable by looking at the charts above.

With Pangram, you can be almost 100% sure what fraction of “post-AI” content is AI-generated because Pangram doesn’t fail with “pre-AI” content at all.

This is what allows Pangram to make claims like: “21%, or 15,899 reviews [at ICLR 2026], were fully AI-generated [and] over half of the reviews had some form of AI involvement, either AI editing, assistance, or full AI-generation.” Or: “3% of the total [front-page product Amazon] reviews studied . . . were AI-generated with high confidence.” Or that, in August 2024, “60,000 AI-generated news articles” were published daily (imagine what the number is right now).

I should note that Pangram’s FPR is very low but still not zero. They claim it’s 1 in 10,000 “on test set documents” and 1 in 100,000 “on held-out scientific papers from ArXiV.”
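To get a feel for what a 1-in-10,000 FPR buys, here is a minimal sketch that applies Bayes' rule to estimate how often a flag is correct. Only the 1-in-10,000 figure comes from Pangram's claim quoted above; the 90% detection rate, the 1% comparison FPR, and the AI shares are hypothetical values chosen for illustration, and the function name is made up.

```python
def precision(fpr: float, detection_rate: float, ai_share: float) -> float:
    """Share of flagged documents that really are AI-generated (Bayes' rule).

    fpr            -- probability that a human-written document gets flagged
    detection_rate -- probability that an AI document gets flagged (1 - FNR)
    ai_share       -- fraction of all documents that are AI-generated
    """
    true_flags = detection_rate * ai_share
    false_flags = fpr * (1.0 - ai_share)
    return true_flags / (true_flags + false_flags)


CLAIMED_FPR = 1 / 10_000   # figure quoted above for Pangram's test set
SLOPPY_FPR = 1 / 100       # hypothetical detector, for comparison only
DETECTION_RATE = 0.90      # hypothetical, not a measured value

for ai_share in (0.01, 0.10, 0.50):  # hypothetical shares of AI text
    low = precision(CLAIMED_FPR, DETECTION_RATE, ai_share)
    high = precision(SLOPPY_FPR, DETECTION_RATE, ai_share)
    print(f"AI share {ai_share:.0%}: precision {low:.2%} vs {high:.2%}")
```

Under these assumptions, even when only 1% of the text is AI-generated, about 99% of the flags are correct at a 1-in-10,000 FPR, whereas a detector with a 1% FPR would be wrong about more than half of what it flags.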

They’ve come a long way since those posts mocking AI detectors for flagging the US Constitution or some verse from the Bible as AI-written. That kind of preposterousness is what Pangram has managed to avoid. They adhere, even if it’s not clear when you read their comms, to William Blackstone’s 1765 principle, which underpins modern legal systems: it’s better for 10 guilty people to escape than for 1 innocent person to suffer.

I’ve defended this idea before in the exact sense that Pangram embodies. I find that’s the better approach: do everything in your power to avoid punishing those who try to play fair in a world giving them every reason to be corrupt. And yet, until now, I’ve watched sophisticated detectors be defeated in the war against AI slop by trying to win every battle: it’s really hard to have a zero error rate of mistaking something a human made for AI (false positive) while also having a zero error rate of mistaking something made with AI for human (false negative).

If you try to do both, you will do neither. You have to compromise. Pangram’s decisive compromise is, as I see it, the first successful offensive in humanity’s reconquest of the web.
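The tension between the two error rates can be sketched with a toy detector that outputs an "AI score" and flags anything at or above a threshold. The scores, labels, and thresholds below are invented for illustration and say nothing about how Pangram or any real detector works internally.

```python
def error_rates(scores, labels, threshold):
    """Return (FPR, FNR) when every score >= threshold is flagged as AI."""
    human_scores = [s for s, y in zip(scores, labels) if y == "human"]
    ai_scores = [s for s, y in zip(scores, labels) if y == "ai"]
    if not human_scores or not ai_scores:
        raise ValueError("need at least one human and one AI document")
    # A false positive is a human document that gets flagged.
    false_positives = sum(s >= threshold for s in human_scores)
    # A false negative is an AI document that slips through.
    false_negatives = sum(s < threshold for s in ai_scores)
    return false_positives / len(human_scores), false_negatives / len(ai_scores)


# Toy data: four human documents followed by four AI documents.
scores = [0.05, 0.10, 0.30, 0.60, 0.40, 0.62, 0.90, 0.95]
labels = ["human"] * 4 + ["ai"] * 4

for threshold in (0.50, 0.65):
    fpr, fnr = error_rates(scores, labels, threshold)
    print(f"threshold {threshold:.2f}: FPR {fpr:.0%}, FNR {fnr:.0%}")
```

On this toy data, raising the threshold from 0.50 to 0.65 takes the FPR from 25% to 0% while the FNR climbs from 25% to 50%: the Blackstone-style choice is to accept the second number in order to protect the first.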

II.

But wait, Pangram also claims a near-zero false negative rate (FNR), the rate at which AI writing escapes detection. Independent researchers at UChicago have confirmed it. It seems that Pangram ended the war! Is that what a trade-off looks like? Let’s try to understand what’s going on.

This means, on the surface, that Pangram is going after the whole pie like every other AI detection company. It seems to complicate the neat story I just told you about winning the battle to not lose the war, and the importance of compromise. Overselling the Blackstone tradeoff would make me guilty of the same laziness I’ve been criticizing in the marketing of other AI detection companies.

The problem with this is that detectors cannot achieve a near-zero true FNR. Whereas FPR can always be measured for real (you can be sure that a detection tool works by going back into a past where AI didn’t exist), measuring FNR requires some tricks that weaken any claim to flag everything that’s AI.

When Pangram, and the researchers who validate them, measure false negatives, they do it the only way they can: they generate text with AI models, maybe use a limited “AI humanizer,” run it through the detector, and count the misses. Against this benchmark, Pangram’s false negative rate is indeed near zero, which can be used for public relations goals and in papers.

The problem is that such a measurement tells us very little about the real world: The distribution of AI presence in a controlled experiment in a lab doesn’t necessarily have any resemblance to the distribution of AI presence in the real world. Instead of accepting that measuring FNR in the wild is near impossible, they settle for a weak definition of FNR and thus a weak claim. That’s ok—AI detectors can’t really do better—but it’s a very different claim than saying: we never fail to flag either AIs or humans. That’s not true when you don’t know how AI is used in the wild!
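The gap between a lab FNR and a real-world FNR can be sketched as a weighted average: the overall miss rate depends on how AI text is actually produced, not only on how well the detector handles each kind. Every number and category name below is invented for illustration; none is a measurement of Pangram or of real usage.

```python
def overall_fnr(miss_rate_by_kind, mix):
    """Miss rate over a population of AI-involved texts.

    miss_rate_by_kind -- per-kind probability that the detector says "human"
    mix               -- share of each kind in the population (must sum to 1)
    """
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("mix shares must sum to 1")
    return sum(share * miss_rate_by_kind[kind] for kind, share in mix.items())


# Hypothetical per-kind miss rates for one detector.
miss_rate = {"pure_ai": 0.01, "lightly_edited": 0.30, "heavily_blended": 0.90}

# A benchmark built only from freshly generated text.
lab_mix = {"pure_ai": 1.0, "lightly_edited": 0.0, "heavily_blended": 0.0}
# A made-up guess at a messier real-world mix.
wild_mix = {"pure_ai": 0.2, "lightly_edited": 0.4, "heavily_blended": 0.4}

print(f"lab FNR:  {overall_fnr(miss_rate, lab_mix):.1%}")
print(f"wild FNR: {overall_fnr(miss_rate, wild_mix):.1%}")
```

The same detector scores a 1% FNR on the lab mix and roughly 48% on the made-up wild mix. The point is not the specific figures but that the benchmark number cannot be carried over without knowing the real mix, which is exactly the thing nobody can measure.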

For instance—allow me this anecdotal data point—I routinely fool Pangram.

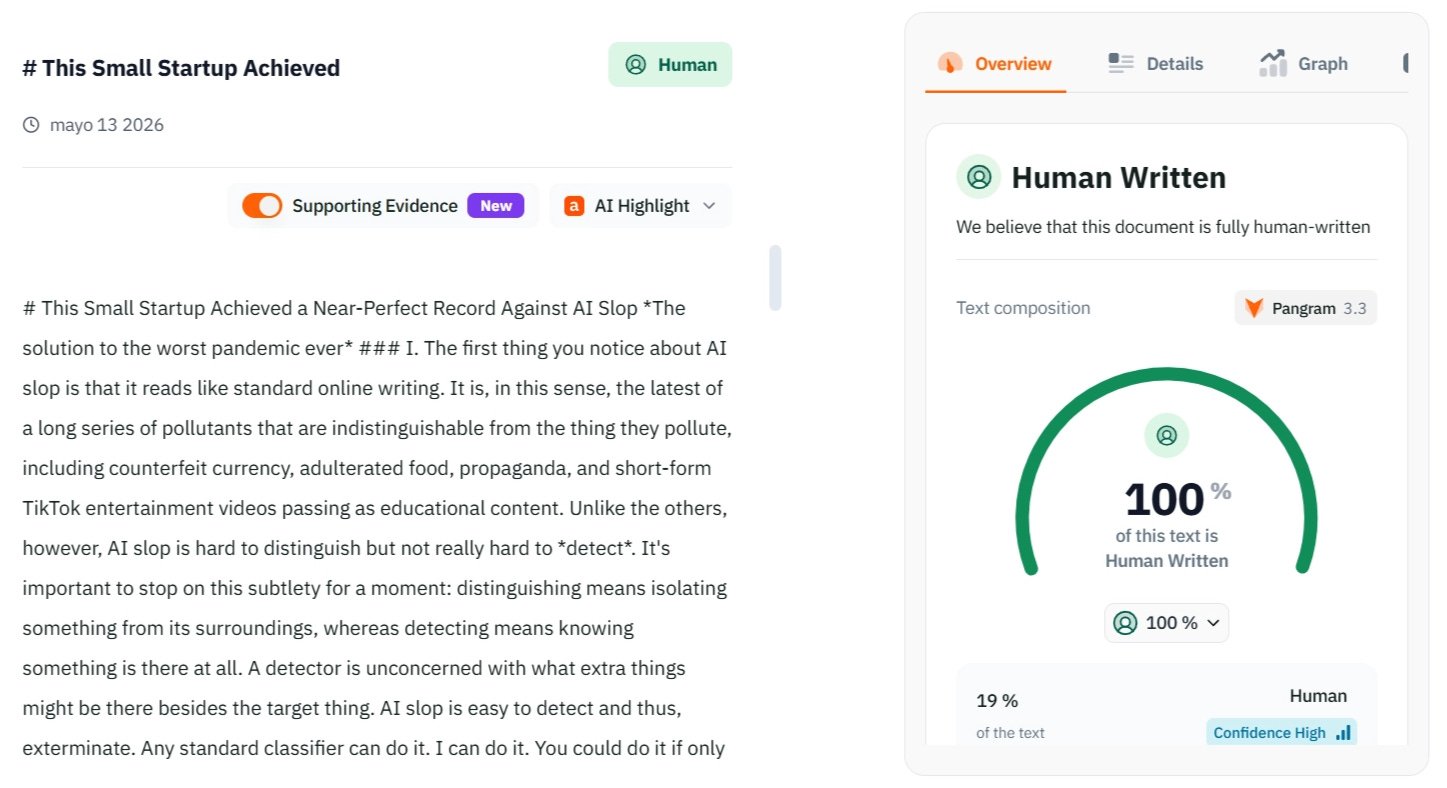

I fool every detector, to be clear. I do it easily, with no special tools, humanizer software, or careful adversarial tricks. I just imbue some parts of the text with my style, add a few words or rewrite a couple of sentences and let AI write the rest. This is less about “changing X words” and more about having a developed “sense of smell” of how to fool these tools. Sometimes I write 10% and AI writes 90% and the result is ok. Sometimes it’s the other way around. I do this for experimental writing where I want to test the limits of AI. But I also do this in private to corroborate that the measured FNR is not useful. Every time, Pangram concedes: 100% Human Written.

My claim is not that I know how to fool AI detectors, but that any competent writer who tries will. Indeed, I suspect that most people will try; I suspect that’s the correct way to think about what happens with AI-generated text in practice. Few people are so lazy (except perhaps LinkedIn influencers) as to automate the entire pipeline and not check for the obvious structural and syntactic tells everyone knows (e.g., “It’s not X, it’s Y,” triads, throat-clearing transitions, etc.).

That’s precisely what FNR benchmarks consistently miss. They test pure AI text against pure human text, two categories that, increasingly, nobody operates in. There are as many ways to blend AI into your writing process as there are writers; the real world is a gradient.

You don’t get to claim the hard victory when your tool can’t measure the hard cases. To claim near-zero true false negatives in these conditions is like claiming you’ve caught every target fish in a lake with your large-scale drift net when your evidence is that you’ve caught every target fish you put there yourself.

This limitation is what makes Pangram more valuable rather than less so.

I will forgive their PR team for missing the mark on FNRs because, in practice, Pangram chooses to optimize for the battle where victory is verifiable. They know what aspect of AI detection is doing the heavy lifting and act accordingly. Pangram will actually lean toward “human-written” when unsure. That’s the right engineering decision and, I’d argue, the right ethical one too. That’s also the real win: not so much a zero score on both FPR and FNR, as having achieved the lowest FPR.

(This section was ~50% written by Claude with a few edits on my end; Pangram’s verdict on this article, which I share at the end, will tell you how much weight to put on those FNR numbers.)

III.

I’ve criticized AI detection tools before. I’ve also criticized a naive “AI;DR” approach (it’s AI, so I didn’t read it) by which people assume they’re good enough at distinguishing AI that they can decide at a glance. Those who’ve read me for a while know that I’m skeptical about our ability to do anything of lasting effect to prevent a total AI-induced destruction of the digital commons.

Well, I might have been right in the specifics but I was wrong in my conclusion. There is hope.

The reason Pangram is different is that they allow us, with really high confidence, to sort out AI slop. If you trust the tool (I do), you can be sure that, when it flags something as AI-generated, it is AI-generated. This removes the main reason why the other detectors were worse than nothing: the liar’s dividend. No one can hide under the pretext that “AI detectors often flag as AI what’s human-written.” To this end, they’ve just announced the next step: a Chrome extension that works across platforms (X, Substack, Medium, LinkedIn, Reddit, etc.).

This is, essentially, humanity delaying a definitive but dubious victory against AI slop in exchange for winning with certainty the most urgent battle, a tradeoff I’ll take every time. By virtue of being good, Pangram is not perfect (which is the other side of Voltaire’s maxim) and that’s ok. Is it so terrible that some guilty individuals will escape our judgment? Not when you consider that not pursuing them ensures that almost no innocent people are harmed.

Besides, you can always trust your instincts. You may have heuristics, rules of thumb that pick up on cues and signs that Pangram has not encoded. That’s ok, use them; we are not perfect at catching true false negatives but, with enough training, we are definitely better at it than Pangram is. You should not blindly trust that something is human-made just because Pangram says so.

That said, I don’t support a witch hunt. I understand the hate and the frustration because we’re all drowning in AI slop, but public shame and cancellation are something that’s better left behind. This article is less a condemnation of AI writing itself and the people who engage with it, and more a defense of individual power to decide what you want your info diet to be made of.

The stance I support is a sort of “functional” AI;DR. Whereas AI;DR is a blanket rejection, a functional version combines the use of the best AI detectors and your natural skills. In fact, not only do I support this, but I encourage you to do it: the only way to clean up the entire digital town is for each of us to clean the sidewalk in front of our own digital homes. Take local action to see global results.

Hey, Pangram, how did I do?
